As artificial intelligence workloads continue to scale, traditional data center infrastructure is being pushed to its limits.

At the center of this transformation is the AI rack — a highly integrated system designed to support extreme compute density, ultra-fast data movement, and advanced thermal management.

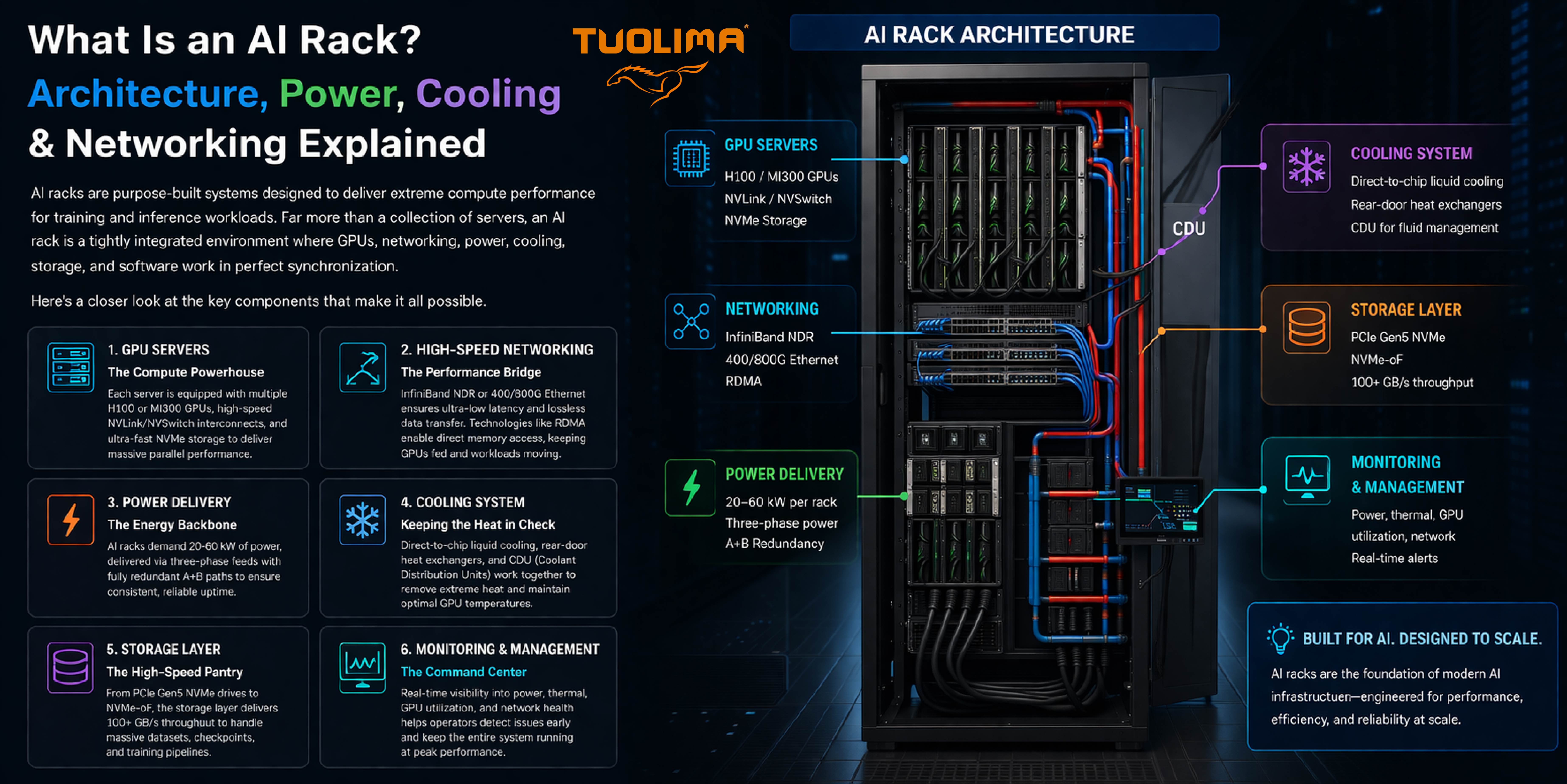

Unlike conventional server racks, AI racks are engineered as complete infrastructure units, where compute, networking, power delivery, cooling, and storage must operate in tight coordination.

This article breaks down the architecture of an AI rack and explains how each component contributes to high-performance AI workloads.

An AI rack is a high-density computing system specifically designed to support artificial intelligence training and inference workloads.

It typically includes:

GPU-accelerated servers

High-speed networking fabric

Advanced power distribution systems

Liquid or hybrid cooling infrastructure

High-performance storage systems

Real-time monitoring and management tools

Rather than functioning as independent components, these elements form a tightly coupled ecosystem optimized for throughput, latency, and reliability.

At the heart of every AI rack are GPU servers, which provide the computational power required for AI training and inference.

Modern AI servers are built with:

Multiple high-performance GPUs (such as H100 or MI300-class architectures)

High-bandwidth GPU interconnects such as NVLink with NVSwitch, or Infinity Fabric on AMD platforms

Ultra-fast NVMe storage for local data access

These systems are designed for parallel processing at scale, enabling massive workloads such as large language models and computer vision training.

However, this performance comes at a cost: significant power consumption and heat generation.
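
The link between GPU count and rack power can be estimated with simple arithmetic. The sketch below is a rough illustration, not a sizing tool: the function name `rack_power_kw` is hypothetical, the 700 W per-GPU figure is the maximum rating published for an H100 SXM module, and the 4,000 W per-server allowance for CPUs, memory, NICs, fans, and conversion losses is an assumption chosen only for the example.

```python
def rack_power_kw(servers: int, gpus_per_server: int,
                  gpu_watts: float, overhead_watts_per_server: float) -> float:
    """Estimate peak rack power draw in kilowatts.

    overhead_watts_per_server covers everything that is not a GPU
    (CPUs, memory, NICs, fans, conversion losses) and is an assumed value.
    """
    if servers < 0 or gpus_per_server < 0:
        raise ValueError("counts must be non-negative")
    if gpu_watts < 0 or overhead_watts_per_server < 0:
        raise ValueError("power values must be non-negative")
    per_server_watts = gpus_per_server * gpu_watts + overhead_watts_per_server
    return servers * per_server_watts / 1000.0


# Assumed example: four 8-GPU servers, 700 W per GPU, 4 kW of other load each.
estimate = rack_power_kw(servers=4, gpus_per_server=8,
                         gpu_watts=700.0, overhead_watts_per_server=4000.0)
print(f"Estimated peak rack power: {estimate:.1f} kW")
```

Under these assumptions, four servers already draw about 38 kW, which shows how quickly a handful of GPU nodes fills a rack's power budget.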

One of the defining characteristics of AI racks is their power demand.

Typical configurations require:

20 kW to 60 kW per rack

Three-phase power input

Redundant A+B power paths

This level of power density requires careful planning of:

Power distribution units (PDUs)

Cabling infrastructure

Redundancy and failover mechanisms

In modern deployments, power is no longer just a utility; it is a primary design constraint.
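
To make the constraint concrete, the per-phase current on a balanced three-phase feed follows from I = P / (√3 × V × PF). The sketch below is illustrative only. The 415 V line-to-line voltage, the 0.95 power factor, and the 80% continuous-load derating are assumptions that do not come from this article, and both function names are hypothetical. Actual values depend on the site and the local electrical code.

```python
import math


def phase_current_amps(load_kw: float, line_voltage: float = 415.0,
                       power_factor: float = 0.95) -> float:
    """Per-phase line current for a balanced three-phase load.

    Uses I = P / (sqrt(3) * V_line_to_line * PF).
    """
    if load_kw < 0 or line_voltage <= 0 or not 0 < power_factor <= 1:
        raise ValueError("invalid electrical parameters")
    return load_kw * 1000.0 / (math.sqrt(3) * line_voltage * power_factor)


def min_feed_rating_amps(load_kw: float, line_voltage: float = 415.0,
                         power_factor: float = 0.95,
                         continuous_derating: float = 0.8) -> float:
    """Smallest circuit rating for ONE feed of an A+B pair.

    Each feed is sized for the full rack load, because it must carry
    everything alone if the other feed fails.
    """
    if not 0 < continuous_derating <= 1:
        raise ValueError("derating must be in (0, 1]")
    return phase_current_amps(load_kw, line_voltage, power_factor) / continuous_derating


for kw in (20, 40, 60):
    amps = phase_current_amps(kw)
    rating = min_feed_rating_amps(kw)
    print(f"{kw} kW rack: {amps:.1f} A per phase, feed rating >= {rating:.1f} A")
```

Sizing each feed for the full load is what makes A+B redundancy expensive: the installed capacity is roughly twice what the rack draws in normal operation.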

AI workloads depend on fast and efficient data movement between compute nodes.

To support this, AI racks utilize:

InfiniBand (NDR at 400 Gb/s per port, and beyond)

400G / 800G Ethernet

RDMA (Remote Direct Memory Access) technologies

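Together, these technologies reduce latency and CPU overhead. RDMA in particular moves data directly between the memory of different nodes without passing through the operating system's network stack, which allows GPUs to communicate directly and efficiently.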

In AI environments, networking is not just connectivity; it is an extension of the compute architecture.
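
One way to see why link speed matters is to estimate how long gradient synchronization takes. The sketch below assumes a ring all-reduce, a common collective algorithm that this article does not itself specify, in which each node sends roughly 2(N-1)/N times the payload size. The result is a bandwidth-only lower bound that ignores latency, protocol overhead, and overlap with computation. The function name and the 14 GB payload are hypothetical.

```python
def ring_allreduce_seconds(payload_bytes: float, num_nodes: int,
                           link_gbps: float) -> float:
    """Bandwidth-only lower bound for one ring all-reduce.

    Each node sends 2 * (N - 1) / N * payload_bytes over its link.
    link_gbps is the per-node link speed in gigabits per second.
    """
    if payload_bytes < 0 or num_nodes < 1 or link_gbps <= 0:
        raise ValueError("invalid parameters")
    if num_nodes == 1:
        return 0.0  # nothing to exchange with a single node
    bytes_per_second = link_gbps * 1e9 / 8
    bytes_sent_per_node = 2 * (num_nodes - 1) / num_nodes * payload_bytes
    return bytes_sent_per_node / bytes_per_second


# Assumed example: 14 GB of gradients synchronized across 8 nodes.
payload = 14e9
for gbps in (400, 800):
    seconds = ring_allreduce_seconds(payload, num_nodes=8, link_gbps=gbps)
    print(f"{gbps}G link: at least {seconds:.3f} s per all-reduce")
```

With these inputs the bound is about 0.49 s on a 400G link and half that on an 800G link, time during which GPUs may sit idle unless communication overlaps with computation.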

As power density increases, traditional air cooling becomes insufficient.

AI racks increasingly rely on:

Direct-to-chip liquid cooling

Rear-door heat exchangers

Coolant Distribution Units (CDUs)

Because liquid absorbs far more heat per unit volume than air, these systems remove heat more efficiently and maintain stable operating conditions for GPUs.

Without proper cooling, systems may experience:

Thermal throttling

Reduced performance

Hardware failure

Cooling is therefore a mission-critical component of AI infrastructure.
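
The coolant flow a rack needs can be approximated from the heat balance Q = ṁ × c_p × ΔT. The sketch below is a simplified estimate with several assumptions: it uses the properties of plain water (water-glycol mixtures have a lower specific heat and need more flow), it assumes the liquid loop captures all of the rack's heat, and the 10 K temperature rise is an illustrative value. The function name is hypothetical.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate, liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate


def coolant_flow_lpm(heat_load_kw: float, delta_t_k: float) -> float:
    """Water flow in litres per minute needed to absorb heat_load_kw
    with a coolant temperature rise of delta_t_k (from Q = m_dot * c_p * dT).
    """
    if heat_load_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat load must be >= 0 and delta T must be > 0")
    mass_flow_kg_per_s = heat_load_kw * 1000.0 / (
        WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


# Assumed example: rack heat loads with a 10 K coolant temperature rise.
for kw in (20, 40, 60):
    print(f"{kw} kW rack: about {coolant_flow_lpm(kw, 10.0):.0f} L/min")
```

Under these assumptions a 60 kW rack needs roughly 86 L/min. Halving the allowed temperature rise doubles the required flow, which is why CDU capacity and loop temperatures are planned together.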

AI workloads generate and process massive volumes of data.

To keep GPUs fully utilized, storage systems must deliver:

PCIe Gen5 NVMe performance

NVMe over Fabrics (NVMe-oF)

High aggregate throughput (100+ GB/s across the storage system)

Efficient storage architecture ensures that data pipelines remain unblocked and compute resources are not underutilized.
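
A quick way to check whether storage can keep up is to compare the read rate the GPUs demand with what the storage tier delivers. The sketch below is a back-of-the-envelope model: the function names, the sample size, and the per-GPU ingest rate are all hypothetical, and it ignores caching, compression, and preprocessing overhead.

```python
def required_read_gb_per_s(num_gpus: int, samples_per_s_per_gpu: float,
                           bytes_per_sample: float) -> float:
    """Aggregate read throughput (GB/s) needed to keep every GPU fed."""
    if num_gpus < 0 or samples_per_s_per_gpu < 0 or bytes_per_sample < 0:
        raise ValueError("inputs must be non-negative")
    return num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


def storage_headroom(available_gb_per_s: float, required_gb_per_s: float) -> float:
    """Ratio of available to required throughput; below 1.0 means GPUs will stall."""
    if required_gb_per_s <= 0:
        raise ValueError("required throughput must be positive")
    return available_gb_per_s / required_gb_per_s


# Assumed example: 64 GPUs, each consuming 2,500 samples/s of 150 kB each.
need = required_read_gb_per_s(64, 2500.0, 150e3)
print(f"Required: {need:.1f} GB/s, "
      f"headroom at 100 GB/s: {storage_headroom(100.0, need):.1f}x")
```

In this example the pipeline needs about 24 GB/s, so a 100 GB/s storage tier leaves a comfortable margin. Larger samples or more GPUs erode that margin linearly.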

Given the complexity of AI racks, real-time monitoring is essential.

Key metrics include:

Power consumption

Temperature and thermal distribution

GPU utilization

Network throughput and congestion

Advanced monitoring systems enable predictive maintenance and rapid fault detection, reducing downtime and improving operational efficiency.
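
As a minimal sketch of how such metrics might be evaluated, the example below checks one telemetry sample against fixed limits. Everything in it is hypothetical: the class and function names, the field names, and the threshold values are placeholders for illustration, and a production system would read live data from its own telemetry sources rather than hard-coded samples.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RackSample:
    power_kw: float
    inlet_temp_c: float
    gpu_utilization_pct: float
    network_utilization_pct: float


@dataclass(frozen=True)
class Thresholds:
    # Placeholder limits; real values depend on the hardware and the site.
    max_power_kw: float = 60.0
    max_inlet_temp_c: float = 35.0
    min_gpu_utilization_pct: float = 50.0
    max_network_utilization_pct: float = 90.0


def check_sample(sample: RackSample,
                 limits: Thresholds = Thresholds()) -> List[str]:
    """Return one alert message per metric that is outside its limit."""
    alerts: List[str] = []
    if sample.power_kw > limits.max_power_kw:
        alerts.append(f"power {sample.power_kw} kW exceeds {limits.max_power_kw} kW")
    if sample.inlet_temp_c > limits.max_inlet_temp_c:
        alerts.append(f"inlet temperature {sample.inlet_temp_c} C exceeds "
                      f"{limits.max_inlet_temp_c} C")
    if sample.gpu_utilization_pct < limits.min_gpu_utilization_pct:
        alerts.append(f"GPU utilization {sample.gpu_utilization_pct}% is below "
                      f"{limits.min_gpu_utilization_pct}%")
    if sample.network_utilization_pct > limits.max_network_utilization_pct:
        alerts.append(f"network utilization {sample.network_utilization_pct}% exceeds "
                      f"{limits.max_network_utilization_pct}%")
    return alerts


for message in check_sample(RackSample(62.5, 38.0, 30.0, 95.0)):
    print("ALERT:", message)
```

Low GPU utilization is treated as an alert here because idle accelerators often point to a bottleneck elsewhere, such as storage or the network.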

An AI rack is not simply a collection of high-performance components.

It is a systems engineering solution in which compute performance, power availability, thermal efficiency, and data movement must be optimized together.

As AI workloads continue to evolve, data centers must transition from traditional IT infrastructure to integrated, high-density, and high-efficiency designs.

AI racks represent a fundamental shift in how data centers are designed and operated.

Organizations that adopt AI infrastructure must rethink:

Power capacity planning

Cooling strategies

Network architecture

Physical layer design

Those who successfully adapt will be better positioned to support the next generation of AI-driven applications.
